On 25th September 2019, Intel announced the availability of Gen 2 Optane, as well as the coupling of next-gen Optane releases with the next Xeon Scalable processor series. While little information has emerged (besides codenames, which are cool but don’t say much), there is speculation that capacities could double.

If you aren’t familiar with this technology yet, Intel Optane DC is Intel’s brand for datacenter-grade 3D XPoint memory (pronounced “Three-D Crosspoint”). It should not be confused with consumer-grade “Intel Optane” and “Intel Optane Memory” products, which are aimed at professional users & technology enthusiasts while taking advantage of the broader Optane brand.

Intel also announced the opening of a new fab in Rio Rancho, New Mexico that will be able to produce Gen 2 Optane wafers. This is an important milestone for Intel, since until now Optane wafers have been produced in Lehi, Utah, where the chips are currently made by Micron. To be more precise, IM Flash (a former Intel-Micron joint venture set up to develop 3D XPoint) manufactures them, but Micron bought Intel’s remaining stake at the beginning of 2019.

3D XPoint: A Brief Recapitulation

3D XPoint is a non-volatile memory technology that combines ultra-low latency with high media endurance. It is different from 3D NAND, the ubiquitous flash media that powers most of our devices nowadays.

A quick look at 3D NAND

Let’s first recapitulate what 3D NAND is, so that we can see why 3D XPoint is different. 3D NAND has a skyscraper-like three-dimensional structure, made of multiple layers of NAND memory stacked on top of each other.

One of the challenges of 3D NAND is that as density increases, reliability decreases. From SLC (Single Level Cell, one bit per cell) to QLC (Quad Level Cell, four bits per cell), the number of bits per cell increases four-fold, and the number of charge levels a cell must distinguish grows from 2 to 16. The more charge levels a cell has, the harder it becomes to ensure charge accuracy, and the more side effects writes have, which impacts media durability. This would take too long to cover here, so we’ll move on to 3D XPoint.
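To put numbers on the density trade-off, here is a minimal sketch of the relationship between bits per cell and the charge levels a cell has to tell apart. It assumes the usual definition that a cell storing n bits needs 2^n distinguishable levels; the names `BITS_PER_CELL` and `charge_states` are made up for this illustration.

```python
# Bits stored per cell for common NAND cell types.
BITS_PER_CELL = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4}

def charge_states(bits_per_cell: int) -> int:
    """Number of charge levels a cell must distinguish to store this many bits."""
    return 2 ** bits_per_cell

for name, bits in BITS_PER_CELL.items():
    print(f"{name}: {bits} bit(s) per cell, {charge_states(bits)} charge levels")
```

Each extra bit doubles the number of levels packed into the same voltage window, which is why the margin for error shrinks so quickly.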

What 3D XPoint is made of

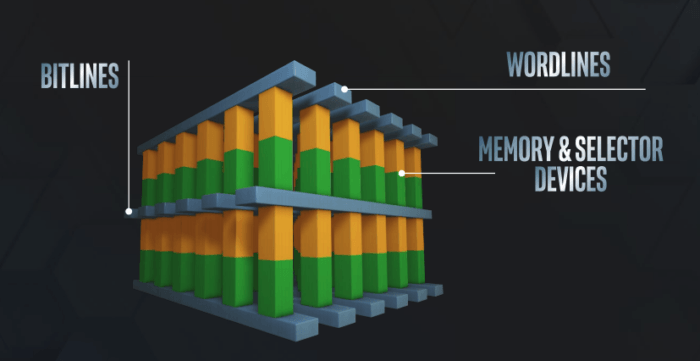

3D XPoint also uses a three-dimensional array structure, packing 128 billion individual memory cells, with each cell storing a single bit of data.
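As a quick back-of-the-envelope conversion, the cell count above can be turned into a capacity figure. This sketch assumes decimal units (1 GB = 10^9 bytes) and ignores any cells reserved for error correction or spare capacity, which the figures above do not break out.

```python
CELLS_PER_ARRAY = 128 * 10**9   # 128 billion cells, as quoted above
BITS_PER_CELL = 1               # each cell stores a single bit

array_bits = CELLS_PER_ARRAY * BITS_PER_CELL
array_gigabytes = array_bits / 8 / 10**9   # bits -> bytes -> decimal GB

print(f"{array_bits // 10**9} Gbit = {array_gigabytes:.0f} GB per array")
```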

Data is written to 3D XPoint cells individually, at the bit level (for comparison, 3D NAND is written in pages of several kilobytes), by varying the voltage applied to the selector (see the picture above).

The use of a selector (which changes the physical state of the material) eliminates the need for transistors, freeing up space in the die to add more cells. Silicon industry experts claim (references 1, 2, 3 and 4) that 3D XPoint is nothing other than Phase-Change Memory. Intel and Micron have of course claimed that 3D XPoint is a unique proprietary technology that they do not intend to make public. If you are geeky enough, you can check the references and make up your own mind.

It’s worth mentioning that 3D XPoint currently only has 2 layers. Future versions of 3D XPoint will have 4 layers, which could translate into doubled capacity from a storage perspective. For comparison, both TLC and QLC 3D NAND are already beyond 64 layers (Intel announced at that same event that it is developing 144-layer QLC 3D NAND).

Intel guarantees the media against any failures due to cell wear during the warranty time of its 3D XPoint devices, which implies that the media must be extremely durable.

Intel Optane DC Products

Optane DC comes in two flavors: SSD and Persistent Memory, two different ways of consuming Optane DC.

Intel Optane DC SSD

SSD is self-explanatory: it’s an implementation of 3D XPoint that can be consumed as ultra-low latency storage for Tier-0 workloads. The reality though is that Optane DC SSDs come in limited capacities and are still expensive compared to TLC (Triple Level Cell) 3D NAND flash.

High cost and lower capacity currently confine Optane DC SSDs primarily to the role of a storage cache tier (used to cache reads and writes).

Intel Optane DC Persistent Memory

This product is a different breed. While still using the same 3D XPoint technology, Optane DC Persistent Memory has the form factor of a DIMM (Dual In-Line Memory Module).

Because of this form factor, Optane DC Persistent Memory depends on the processor and its memory controller. Currently, only Intel Cascade Lake processors are able to use it.

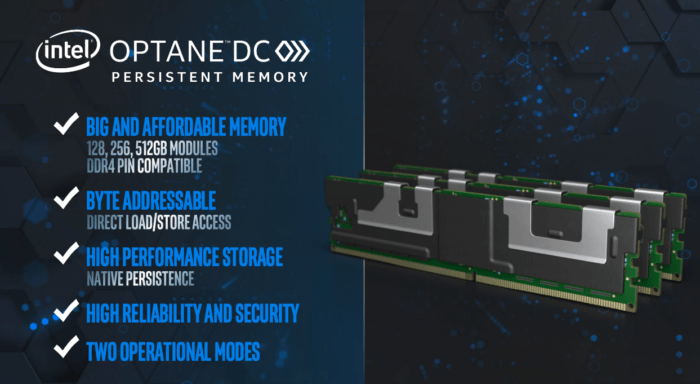

Albeit small in comparison to SSDs, Optane DC Persistent Memory modules come in sizes of 128, 256 and 512 GB, which is a game changer compared to DRAM modules, where sizes of up to 32 GB per module are the most common in datacenter deployments today.

Unlike its SSD counterpart (which is one hell of a fast and durable SSD), Optane DC Persistent Memory has two operating modes: Memory Mode and App Direct Mode.

Note that it is also possible to operate in Dual Mode, i.e. a combination of Memory Mode and App Direct Mode, but for article length reasons we will not cover Dual Mode.

Memory Mode

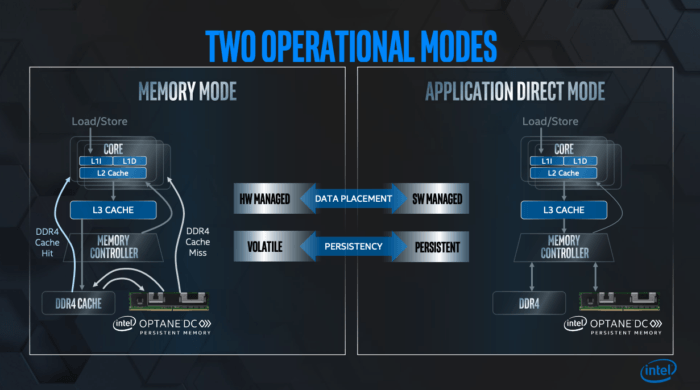

In Memory Mode, the system DRAM and Optane modules are combined together and appear to the operating system as a single big memory pool. This is achieved by using the DRAM inside the system as a fast memory cache and using Optane (fast, but still slower than DRAM) as the main memory pool. Caching operations are handled by the CPU memory controller.

Be warned however that despite Optane being non-volatile media, Memory Mode is a volatile mode, because it relies on DRAM for memory caching.

Memory Mode allows more memory density at a lower price point, which makes sense for large virtualization hosts and legacy applications: the system sees more memory, but it costs significantly less.

Keep in mind that the use of DRAM as a cache means that applications which repeatedly retrieve the same data sets will fare better than applications which read a lot of unique or random content, so this aspect must be factored in when evaluating whether or not to use Optane DC Persistent Memory in this mode.
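The effect of the access pattern on a cache can be illustrated with a toy simulation. This is only a sketch under stated assumptions: it models the DRAM cache as a small LRU cache, which is a simplification chosen for readability and not a description of how the memory controller actually caches; the sizes and the `hit_rate` helper are hypothetical.

```python
import random
from collections import OrderedDict

def hit_rate(accesses, cache_lines):
    """Fraction of accesses served from a small LRU cache (standing in for DRAM)."""
    cache = OrderedDict()
    hits = 0
    for addr in accesses:
        if addr in cache:
            hits += 1
            cache.move_to_end(addr)        # mark as most recently used
        else:
            cache[addr] = True
            if len(cache) > cache_lines:
                cache.popitem(last=False)  # evict the least recently used line
    return hits / len(accesses)

rng = random.Random(42)    # fixed seed so runs are repeatable
CACHE_LINES = 1_000        # hypothetical DRAM cache size, in lines
TOTAL_LINES = 100_000      # hypothetical Optane capacity, in lines
N = 50_000                 # number of simulated reads per workload

# Workload A keeps returning to a working set that fits in the cache.
hot = [rng.randrange(500) for _ in range(N)]
# Workload B spreads its reads uniformly across the whole address space.
scattered = [rng.randrange(TOTAL_LINES) for _ in range(N)]

hot_rate = hit_rate(hot, CACHE_LINES)
scattered_rate = hit_rate(scattered, CACHE_LINES)
print(f"hot working set: {hot_rate:.1%} served from the cache")
print(f"scattered reads: {scattered_rate:.1%} served from the cache")
```

The first workload is served almost entirely from the cache, while the second mostly falls through to the slower tier, which is the behavior to keep in mind when sizing DRAM against Optane capacity.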

App Direct Mode

In App Direct Mode, Optane can be used as persistent memory. In this mode, the DIMM becomes addressable just like memory, via non-volatile memory pages.

The first prerequisite for App Direct Mode is an operating system or hypervisor that is persistent memory-aware. The next is having persistent memory-aware applications that can take advantage of this mode. Non-aware applications will not be able to leverage App Direct Mode, so it is key to carefully weigh whether to deploy Optane DC in Memory Mode or App Direct Mode.
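To give a feel for what "addressable like memory" means for an application, here is a minimal sketch using a memory-mapped file. It makes several assumptions: on a persistent memory-aware system the file would typically live on a filesystem backed by the Optane modules, whereas here an ordinary temporary file stands in so the example runs anywhere; the `open_region` helper and the file name are hypothetical. Real applications would more likely use a dedicated library such as PMDK, which handles flushing and consistency far more carefully than this.

```python
import mmap
import os
import tempfile

def open_region(path: str, size: int) -> mmap.mmap:
    """Map a file of `size` bytes into the process address space."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        os.ftruncate(fd, size)     # make sure the file is large enough
        return mmap.mmap(fd, size)
    finally:
        os.close(fd)               # the mapping keeps its own handle

# Stand-in path; on a real system this would point at persistent memory.
path = os.path.join(tempfile.mkdtemp(), "pmem_demo.bin")

region = open_region(path, 4096)
region[0:5] = b"hello"             # plain byte-level store, no write() call
region.flush()                     # ask the OS to make the change durable
region.close()

# Reopen the mapping, as an application would after a restart.
region = open_region(path, 4096)
data = bytes(region[0:5])
region.close()
os.remove(path)

print(data)
```

The point of the sketch is the programming model: data is read and written with ordinary memory operations rather than through block-sized I/O requests.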

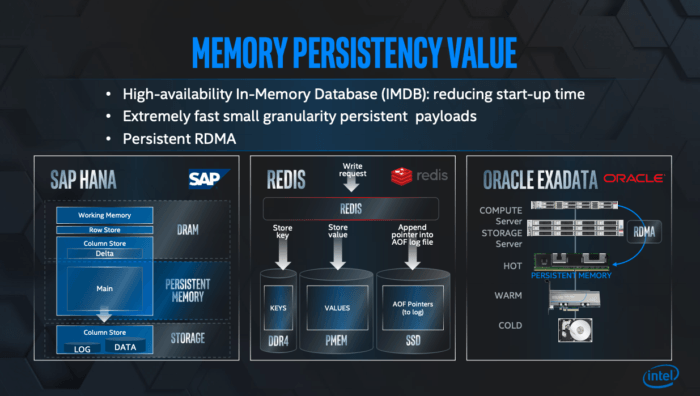

PM-aware applications like SAP HANA, Redis, and Cassandra can vastly benefit from persistent memory, as Intel recently highlighted in Seoul during their announcement.

App Direct Mode is best suited for in-memory applications (databases, analytics) which require very large amounts of DRAM to function adequately. App Direct Mode allows these apps to consume very large amounts of memory at almost half of the DRAM cost.

The current generation of Cascade Lake processors supports up to 4.5 TB of memory per physical processor, made up of 3 TB of Optane DC Persistent Memory plus 1.5 TB of DRAM (9 TB for two-socket systems, 18 TB for four-socket systems), making ample capacity available at a better price point.

Conclusion

Optane DC Persistent Memory has the potential to become transformational, especially in App Direct Mode. For years, computers and applications have operated on the premise of small amounts of fast, expensive RAM coupled with larger amounts of cheaper storage. This has shaped choices in terms of systems design, application design and data flows.

What App Direct Mode does is present applications with a very fast and durable medium that can be used to store data close to the CPU, without having to move it to an external medium. The availability of Optane allows the industry to move from theory to practice and allows application developers to innovate.

This is not the demise of traditional storage, but we can expect storage flows & principles to change in the coming years.

We can only guess how applications will be designed a decade from now, but from where we stand, the future looks glorious. At least from a persistent memory usage perspective.

Persistent Memory: Further Reading

- Intel has a dedicated page about Persistent Memory Programming and PMDK (Persistent Memory Development Kit)

- Along with proposing NVMe and Persistent Memory standards, the SNIA has developed a programming model specification for Persistent Memory which is worth exploring.